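1. Software versions

First upgrade the CentOS 7 kernel, preferably to 4.4 or later (the default 3.10 kernel has bugs that show up when running Docker at scale).

| Software/OS | Version | Notes |
|---|---|---|
| CentOS | 7.9 | Minimal install |
| k8s | 1.15.1 | |
| flannel | 0.11 | |
| etcd | 3.3.10 | |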

2. Role assignment

| k8s role | Hostname | Node IP | Notes |
|---|---|---|---|
| master1+etcd1 | master1.host.com | 10.0.0.70 | master nodes |
| master2+etcd2 | master2.host.com | 10.0.0.71 | |
| master3+etcd3 | master3.host.com | 10.0.0.72 | |
| node1 | node1.host.com | 10.0.0.73 | worker nodes |
| node2 | node2.host.com | 10.0.0.74 | |
| haproxy1+keepalived | haproxy1.host.com | 10.0.0.75 | load balancer (VIP: 10.0.0.80) |
| haproxy2+keepalived | haproxy2.host.com | 10.0.0.76 | |

3. Installation procedure

Milestone 1: Install basic tools

Run on all nodes


yum install net-tools vim wget -y

Milestone 2: Disable the firewall and SELinux

Run on all nodes

systemctl stop firewalld

systemctl disable firewalld

sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

reboot

Milestone 3: Set the time zone

Run on all nodes

cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime -rf

Milestone 4: Disable swap

Run on all nodes

swapoff -a

sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

Milestone 5: Set up system time synchronization

Run on all nodes

yum install -y ntpdate

ntpdate -u ntp.aliyun.com

echo "*/5 * * * * root ntpdate ntp.aliyun.com >/dev/null 2>&1" >> /etc/crontab

systemctl start crond.service

systemctl enable crond.service

Milestone 6: Set hostnames
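A minimal sketch of the hosts entries for this step, assuming the hostnames and IPs from the role-assignment table. The function name `gen_hosts` and the short aliases (master1, node1, ...) are illustrative additions, not part of the original setup.

```shell
# Sketch: print /etc/hosts entries built from the role-assignment table.
# The short aliases in the third column are an assumption added for convenience.
gen_hosts() {
    cat <<'EOF'
127.0.0.1   localhost localhost.localdomain
10.0.0.70   master1.host.com  master1
10.0.0.71   master2.host.com  master2
10.0.0.72   master3.host.com  master3
10.0.0.73   node1.host.com    node1
10.0.0.74   node2.host.com    node2
10.0.0.75   haproxy1.host.com haproxy1
10.0.0.76   haproxy2.host.com haproxy2
EOF
}

# Usage (as root, on every node):
#   gen_hosts > /etc/hosts
#   hostnamectl set-hostname master1.host.com   # use each node's own name
```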

cat > /etc/hosts

Milestone 7: Passwordless SSH login

Run the script on the master only

yum install sshpass -y

cat > scp.sh /dev/null

if [ $? -eq 0 ]; then
    echo "${node}: key copied"
else
    echo "${node}: key copy failed"
fi

done

EOF
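The key-distribution script is only partly visible above. The following is a hedged sketch of what such a script could look like, not the author's exact script: the node list, the `copy_keys` function, the `DRY_RUN` switch, and passing the password through the `SSHPASS` environment variable are all assumptions.

```shell
#!/bin/bash
# Sketch of a key-distribution script. NODES and the SSHPASS variable are
# assumptions; DRY_RUN=1 only prints the commands that would be run.
NODES="${NODES:-10.0.0.70 10.0.0.71 10.0.0.72 10.0.0.73 10.0.0.74}"

copy_keys() {
    local node
    for node in $NODES; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "sshpass -e ssh-copy-id -o StrictHostKeyChecking=no root@${node}"
            continue
        fi
        # sshpass -e reads the password from the SSHPASS environment variable
        if sshpass -e ssh-copy-id -o StrictHostKeyChecking=no "root@${node}" &>/dev/null; then
            echo "${node}: key copied"
        else
            echo "${node}: key copy failed"
        fi
    done
}

# Usage:
#   [ -f ~/.ssh/id_rsa ] || ssh-keygen -t rsa -N '' -f ~/.ssh/id_rsa
#   SSHPASS='your-root-password' copy_keys
```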

Milestone 8: Tune kernel parameters

On master and node machines
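As an illustration of this step, here is a sketch of kernel parameters that are commonly set on Kubernetes hosts. The exact list used in this deployment is not shown, so every key and value below is an assumption, as is the helper name `write_k8s_sysctl`.

```shell
# Sketch: kernel parameters commonly set for Kubernetes nodes.
# The values are assumptions, not the original author's list.
write_k8s_sysctl() {
    local target="${1:-/etc/sysctl.d/kubernetes.conf}"
    cat > "$target" <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
vm.swappiness = 0
EOF
}

# Usage (as root):
#   modprobe br_netfilter            # needed for the net.bridge.* keys
#   write_k8s_sysctl
#   sysctl -p /etc/sysctl.d/kubernetes.conf
```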

cat >/etc/sysctl.d/kubernetes.conf

Milestone 9: Configure high availability

Install keepalived

Installation

Install on both haproxy machines

yum install -y keepalived

Write the configuration file

Configure haproxy1 as MASTER

cat >/etc/keepalived/keepalived.conf

Configure haproxy2 as BACKUP

cat >/etc/keepalived/keepalived.conf

Start keepalived and enable it at boot

systemctl start keepalived

systemctl enable keepalived
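A minimal sketch of a keepalived.conf for the two load balancers, using the VIP 10.0.0.80 from the role table. The interface name `eth0`, the `virtual_router_id`, the priorities, and the password are assumptions, and `render_keepalived_conf` is a hypothetical helper.

```shell
# Sketch: render a keepalived.conf for one load balancer.
# Arguments: <MASTER|BACKUP> <priority> [interface]
render_keepalived_conf() {
    local state="$1" priority="$2" iface="${3:-eth0}"
    cat <<EOF
vrrp_instance VI_1 {
    state ${state}
    interface ${iface}
    virtual_router_id 51
    priority ${priority}
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        10.0.0.80
    }
}
EOF
}

# Usage:
#   render_keepalived_conf MASTER 100 > /etc/keepalived/keepalived.conf   # haproxy1
#   render_keepalived_conf BACKUP 90  > /etc/keepalived/keepalived.conf   # haproxy2
```

The MASTER instance needs the higher priority so that it holds the VIP whenever it is alive.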

Install haproxy (the two nodes differ only in their addresses)

Installation

1. Upload the source packages

root@haproxy1:/usr/local/src# ll

total 3792

drwxr-xr-x 4 root root 96 Jun 9 14:01 ./

drwxr-xr-x 10 root root 140 Jun 9 14:02 ../

drwxrwxr-x 13 root root 4096 Jun 9 14:16 haproxy-2.4.4/

-rw-r--r-- 1 root root 3570069 May 24 10:25 haproxy-2.4.4.tar.gz

drwxr-xr-x 4 1026 ygw 58 Jun 27 2018 lua-5.3.5/

-rw-r--r-- 1 root root 303543 Nov 16 2020 lua-5.3.5.tar.gz

2. Create symlinks

ln -s /usr/local/src/lua-5.3.5 /usr/local/lua

ln -s /usr/local/src/haproxy-2.4.4 /usr/local/haproxy

3. Build and install Lua

1) Install dependencies

yum install gcc gcc-c++ readline-devel glibc glibc-devel pcre pcre-devel openssl-devel zlib-devel systemd-devel -y

cd /usr/local/lua && make linux

Check the version that was built

/usr/local/lua/src/lua -v

Lua 5.3.5 Copyright (C) 1994-2018 Lua.org, PUC-Rio

4. Build and install haproxy

cd /usr/local/src/haproxy-2.4.4 && make TARGET=linux-glibc USE_PCRE=1 USE_OPENSSL=1 USE_ZLIB=1 USE_SYSTEMD=1 USE_LUA=1 LUA_INC=/usr/local/lua/src/ LUA_LIB=/usr/local/lua/src/ && make install PREFIX=/apps/haproxy

5. Symlink the binary onto the PATH

ln -s /apps/haproxy/sbin/haproxy /usr/sbin/

6. Check the version

haproxy -v

Write the haproxy configuration file

mkdir /etc/haproxy

cat >/etc/haproxy/haproxy.cfg

Write the haproxy service file

cat >/etc/systemd/system/haproxy.service

Set up the user and directory

mkdir /var/lib/haproxy

useradd -r -s /sbin/nologin -d /var/lib/haproxy haproxy

Start haproxy and enable it at boot

systemctl daemon-reload

systemctl start haproxy

systemctl enable haproxy
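For reference, a sketch of a haproxy.cfg that balances TCP connections for the apiserver across the three masters. The listen port 6443, the timeouts, and the `render_haproxy_cfg` helper are assumptions; only the master IPs and the `haproxy` user come from the steps above.

```shell
# Sketch: print a minimal TCP load-balancing config for kube-apiserver.
# Port 6443 on the load balancer and all timeouts are assumptions.
render_haproxy_cfg() {
    cat <<'EOF'
global
    user haproxy
    group haproxy
    maxconn 4096

defaults
    mode tcp
    timeout connect 5s
    timeout client  1m
    timeout server  1m

listen kube-apiserver
    bind *:6443
    balance roundrobin
    server master1 10.0.0.70:6443 check
    server master2 10.0.0.71:6443 check
    server master3 10.0.0.72:6443 check
EOF
}

# Usage:
#   render_haproxy_cfg > /etc/haproxy/haproxy.cfg
#   haproxy -c -f /etc/haproxy/haproxy.cfg    # syntax check before starting
```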

Milestone 10: Configure certificates

Download the self-signed certificate tools

Run on master1

mkdir /soft && cd /soft

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64

mv cfssl_linux-amd64 /usr/local/bin/cfssl

mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

mv cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo

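Generate the etcd certificates

Create the directory (master1)

mkdir /root/etcd && cd /root/etcd

Certificate configuration

Master1

cd /root/etcd

cat

Create the CA certificate signing request file

cd /root/etcd

cat

Create the etcd certificate signing request file

The IPs of all masters can be added to the CSR file (run on master1)

cd /root/etcd

cat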

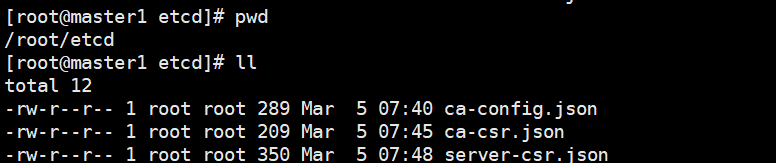
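A sketch of the three cfssl input files for the etcd certificates. The file names and the `www` profile match the commands and directory listings in this section, while the expiry, the `CN` values, the `names` fields, and the helper `write_etcd_cert_configs` are assumptions.

```shell
# Sketch: write ca-config.json, ca-csr.json and server-csr.json for etcd.
# Expiry, CN and names are assumptions; the "www" profile matches -profile=www.
write_etcd_cert_configs() {
    local dir="${1:-/root/etcd}"
    mkdir -p "$dir"
    cat > "$dir/ca-config.json" <<'EOF'
{
  "signing": {
    "default": { "expiry": "87600h" },
    "profiles": {
      "www": {
        "expiry": "87600h",
        "usages": ["signing", "key encipherment", "server auth", "client auth"]
      }
    }
  }
}
EOF
    cat > "$dir/ca-csr.json" <<'EOF'
{
  "CN": "etcd CA",
  "key": { "algo": "rsa", "size": 2048 },
  "names": [ { "C": "CN", "L": "Beijing", "ST": "Beijing" } ]
}
EOF
    # Every etcd member IP must appear in "hosts", or TLS verification fails.
    cat > "$dir/server-csr.json" <<'EOF'
{
  "CN": "etcd",
  "hosts": ["10.0.0.70", "10.0.0.71", "10.0.0.72"],
  "key": { "algo": "rsa", "size": 2048 },
  "names": [ { "C": "CN", "L": "Beijing", "ST": "Beijing" } ]
}
EOF
}

# Usage: write_etcd_cert_configs /root/etcd
```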

Generate the etcd CA certificate and key pair (run on master1)

cd /root/etcd/

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

[root@master1 etcd]# ll

total 24

-rw-r--r-- 1 root root 289 Mar 5 07:40 ca-config.json # CA configuration file

-rw-r--r-- 1 root root 956 Mar 5 07:51 ca.csr # CA certificate signing request (generated)

-rw-r--r-- 1 root root 209 Mar 5 07:45 ca-csr.json # CA CSR definition file

-rw------- 1 root root 1679 Mar 5 07:51 ca-key.pem # CA private key

-rw-r--r-- 1 root root 1265 Mar 5 07:51 ca.pem # CA certificate

-rw-r--r-- 1 root root 350 Mar 5 07:48 server-csr.json

Generate the etcd certificate (master1)

cd /root/etcd/

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

[root@master1 etcd]# ll

total 36

-rw-r--r-- 1 root root 289 Mar 5 07:40 ca-config.json

-rw-r--r-- 1 root root 956 Mar 5 07:51 ca.csr

-rw-r--r-- 1 root root 209 Mar 5 07:45 ca-csr.json

-rw------- 1 root root 1679 Mar 5 07:51 ca-key.pem

-rw-r--r-- 1 root root 1265 Mar 5 07:51 ca.pem

-rw-r--r-- 1 root root 1086 Mar 5 07:54 server.csr

-rw-r--r-- 1 root root 350 Mar 5 07:48 server-csr.json

-rw------- 1 root root 1679 Mar 5 07:54 server-key.pem # used by etcd clients

-rw-r--r-- 1 root root 1415 Mar 5 07:54 server.pem

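Create the Kubernetes certificates

These certificates are used for communication between Kubernetes nodes and are separate from the etcd certificates created earlier. (master1)

Create the directory (master1)

mkdir /root/kubernetes/ && cd /root/kubernetes/

Write the CA configuration file (master1)

cd /root/kubernetes/

cat

Create the CA certificate signing request file (master1)

cd /root/kubernetes/

cat

Create the API server certificate signing request file (master1)

cd /root/kubernetes/

cat

Create the kube-proxy certificate signing request file (master1)

cd /root/kubernetes/

cat

Generate the Kubernetes CA certificate and key pair

Generate the CA certificate (master1)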

[root@master1 kubernetes]# pwd

/root/kubernetes

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

[root@master1 kubernetes]# ll

total 28

-rw-r--r-- 1 root root 296 Mar 5 07:58 ca-config.json

-rw-r--r-- 1 root root 1001 Mar 5 08:23 ca.csr

-rw-r--r-- 1 root root 264 Mar 5 08:02 ca-csr.json

-rw------- 1 root root 1679 Mar 5 08:23 ca-key.pem

-rw-r--r-- 1 root root 1359 Mar 5 08:23 ca.pem

-rw-r--r-- 1 root root 230 Mar 5 08:23 kube-proxy-csr.json

-rw-r--r-- 1 root root 681 Mar 5 08:21 server-csr.json

Generate the api-server certificate (master1)

[root@master1 kubernetes]# pwd

/root/kubernetes

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

[root@master1 kubernetes]# ll

total 40

-rw-r--r-- 1 root root 296 Mar 5 07:58 ca-config.json

-rw-r--r-- 1 root root 1001 Mar 5 08:23 ca.csr

-rw-r--r-- 1 root root 264 Mar 5 08:02 ca-csr.json

-rw------- 1 root root 1679 Mar 5 08:23 ca-key.pem

-rw-r--r-- 1 root root 1359 Mar 5 08:23 ca.pem

-rw-r--r-- 1 root root 230 Mar 5 08:23 kube-proxy-csr.json

-rw-r--r-- 1 root root 1419 Mar 5 08:25 server.csr

-rw-r--r-- 1 root root 681 Mar 5 08:21 server-csr.json

-rw------- 1 root root 1679 Mar 5 08:25 server-key.pem

-rw-r--r-- 1 root root 1785 Mar 5 08:25 server.pem

Generate the kube-proxy certificate (master1)

[root@master1 kubernetes]# pwd

/root/kubernetes

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

[root@master1 kubernetes]# ll

total 52

-rw-r--r-- 1 root root 296 Mar 5 07:58 ca-config.json

-rw-r--r-- 1 root root 1001 Mar 5 08:23 ca.csr

-rw-r--r-- 1 root root 264 Mar 5 08:02 ca-csr.json

-rw------- 1 root root 1679 Mar 5 08:23 ca-key.pem

-rw-r--r-- 1 root root 1359 Mar 5 08:23 ca.pem

-rw-r--r-- 1 root root 1009 Mar 5 08:27 kube-proxy.csr

-rw-r--r-- 1 root root 230 Mar 5 08:23 kube-proxy-csr.json

-rw------- 1 root root 1679 Mar 5 08:27 kube-proxy-key.pem

-rw-r--r-- 1 root root 1403 Mar 5 08:27 kube-proxy.pem

-rw-r--r-- 1 root root 1419 Mar 5 08:25 server.csr

-rw-r--r-- 1 root root 681 Mar 5 08:21 server-csr.json

-rw------- 1 root root 1679 Mar 5 08:25 server-key.pem

-rw-r--r-- 1 root root 1785 Mar 5 08:25 server.pem

Milestone 11: Deploy etcd

Download the etcd binary release (all masters)

mkdir -p /soft && cd /soft

wget https://github.com/etcd-io/etcd/releases/download/v3.3.10/etcd-v3.3.10-linux-amd64.tar.gz

tar -xvf etcd-v3.3.10-linux-amd64.tar.gz

cd etcd-v3.3.10-linux-amd64/

cp etcd etcdctl /usr/local/bin/

Edit the etcd configuration file (all masters)

Remember to change ETCD_NAME on each node

Remember to change the listen addresses on each node

mkdir -p /etc/etcd/{cfg,ssl}
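A sketch of a per-node etcd.conf built from etcd's `ETCD_*` environment variables, which the systemd unit would typically load through `EnvironmentFile`. The member names (etcd-1 to etcd-3), the data directory, the cluster token, and the helper `render_etcd_conf` are assumptions; the TLS certificate paths would still have to be supplied, for example as flags in the unit file.

```shell
# Sketch: render /etc/etcd/cfg/etcd.conf for one member.
# Arguments: <member-name> <node-ip>. Member names and data dir are assumptions.
render_etcd_conf() {
    local name="$1" ip="$2"
    cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="etcd-1=https://10.0.0.70:2380,etcd-2=https://10.0.0.71:2380,etcd-3=https://10.0.0.72:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Usage:
#   render_etcd_conf etcd-1 10.0.0.70 > /etc/etcd/cfg/etcd.conf   # on master1
#   render_etcd_conf etcd-2 10.0.0.71 > /etc/etcd/cfg/etcd.conf   # on master2
#   render_etcd_conf etcd-3 10.0.0.72 > /etc/etcd/cfg/etcd.conf   # on master3
```

Only the name and the listen and advertise addresses change between nodes; the `ETCD_INITIAL_CLUSTER` line is identical everywhere.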

cat >/etc/etcd/cfg/etcd.conf

mkdir -p /etc/etcd/{cfg,ssl}

cat >/etc/etcd/cfg/etcd.conf

mkdir -p /etc/etcd/{cfg,ssl}

cat >/etc/etcd/cfg/etcd.conf

Create the etcd systemd service (all masters)

cat > /usr/lib/systemd/system/etcd.service

Copy the etcd certificates to the target directory (master1)

cp /root/etcd/*pem /etc/etcd/ssl/

scp /etc/etcd/ssl/* 10.0.0.71:/etc/etcd/ssl/

scp /etc/etcd/ssl/* 10.0.0.72:/etc/etcd/ssl/

Before copying to the worker nodes, first create the target directories on each node:

mkdir -p /etc/etcd/{cfg,ssl}

scp /etc/etcd/ssl/* 10.0.0.73:/etc/etcd/ssl/

scp /etc/etcd/ssl/* 10.0.0.74:/etc/etcd/ssl/

Start etcd (master nodes)

systemctl start etcd

systemctl enable etcd

systemctl status etcd

启动的时候master1会先挂起

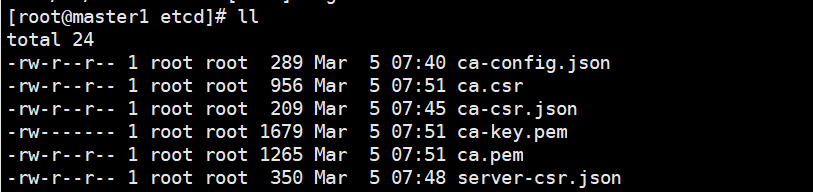

检查etcd 集群是否运行正常

etcdctl --ca-file=/etc/etcd/ssl/ca.pem --cert-file=/etc/etcd/ssl/server.pem --key-file=/etc/etcd/ssl/server-key.pem --endpoints="https://10.0.0.70:2379" cluster-health

member 55829f95b702c087 is healthy: got healthy result from https://10.0.0.71:2379

member b1f1be65c0a2eb31 is healthy: got healthy result from https://10.0.0.72:2379

member b5d8162db028bc4e is healthy: got healthy result from https://10.0.0.70:2379

cluster is healthy

Milestone 12: Install Docker on all worker nodes

See the Docker installation documentation

Milestone 13: Create the Pod network range that Docker will be allocated from (on any master)

etcdctl --ca-file=/etc/etcd/ssl/ca.pem \
  --cert-file=/etc/etcd/ssl/server.pem --key-file=/etc/etcd/ssl/server-key.pem \
  --endpoints="https://10.0.0.70:2379,https://10.0.0.71:2379,https://10.0.0.72:2379" \
  set /coreos.com/network/config \
  '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

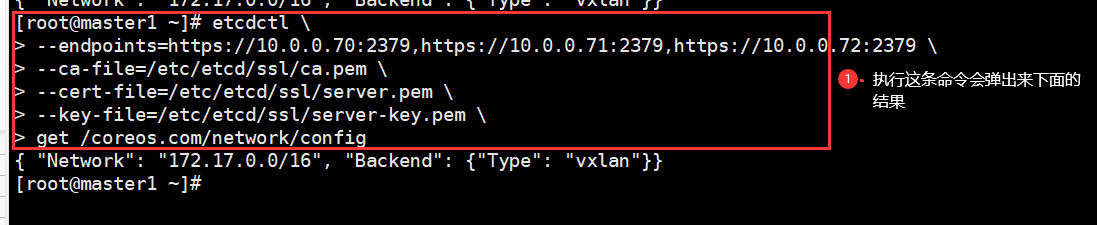

Check

etcdctl \
  --endpoints=https://10.0.0.70:2379,https://10.0.0.71:2379,https://10.0.0.72:2379 \
  --ca-file=/etc/etcd/ssl/ca.pem \
  --cert-file=/etc/etcd/ssl/server.pem \
  --key-file=/etc/etcd/ssl/server-key.pem \
  get /coreos.com/network/config

Milestone 14: Deploy flannel

Installation

Install on all nodes

cd /soft

tar xf flannel-v0.11.0-linux-amd64.tar.gz

mv flanneld mk-docker-opts.sh /usr/local/bin/

Copy to the other nodes

scp /usr/local/bin/flanneld 10.0.0.71:/usr/local/bin

scp /usr/local/bin/mk-docker-opts.sh 10.0.0.71:/usr/local/bin

scp /usr/local/bin/flanneld 10.0.0.72:/usr/local/bin

scp /usr/local/bin/mk-docker-opts.sh 10.0.0.72:/usr/local/bin

scp /usr/local/bin/flanneld 10.0.0.73:/usr/local/bin

scp /usr/local/bin/mk-docker-opts.sh 10.0.0.73:/usr/local/bin

scp /usr/local/bin/flanneld 10.0.0.74:/usr/local/bin

scp /usr/local/bin/mk-docker-opts.sh 10.0.0.74:/usr/local/bin
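The eight scp commands above can also be written as a loop. This is a sketch: the `distribute` function, the `HOSTS` variable, and the `SCP` override (useful for a dry run with `SCP=echo`) are hypothetical names introduced here.

```shell
# Sketch: copy one or more files to the same directory on every host in HOSTS.
HOSTS="${HOSTS:-10.0.0.71 10.0.0.72 10.0.0.73 10.0.0.74}"

distribute() {
    # distribute <dest-dir> <file>...
    local dest="$1"; shift
    local host
    for host in $HOSTS; do
        ${SCP:-scp} "$@" "${host}:${dest}"
    done
}

# Usage:
#   distribute /usr/local/bin /usr/local/bin/flanneld /usr/local/bin/mk-docker-opts.sh
#   SCP=echo distribute /usr/local/bin /usr/local/bin/flanneld   # dry run
```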

Configure flannel

On all k8s nodes

mkdir -p /etc/flannel

cat > /etc/flannel/flannel.cfg

Write the flannel service file

On all k8s nodes

cat > /usr/lib/systemd/system/flanneld.service

Start flannel

On all nodes

systemctl daemon-reload

systemctl start flanneld.service

systemctl enable flanneld.service

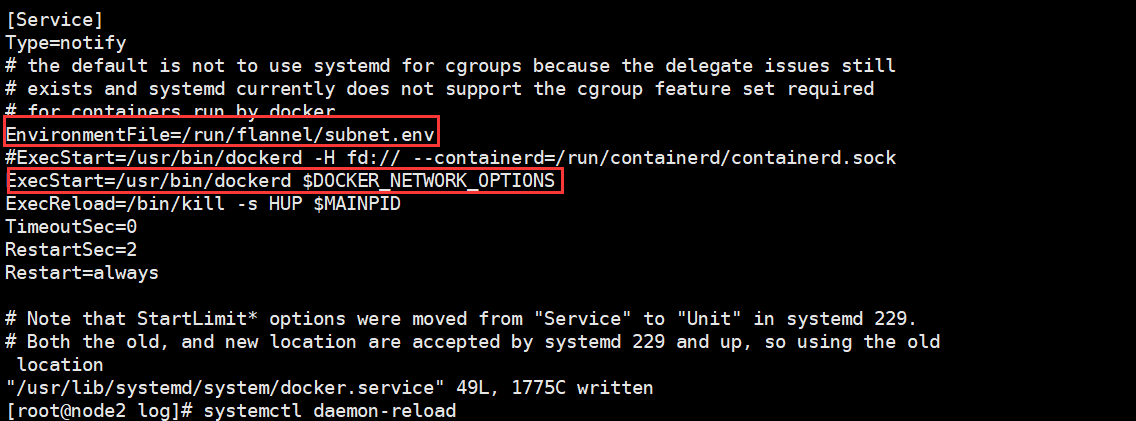

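Change the Docker startup configuration to use flannel

The purpose of this step is to put Docker and flannel on the same network range

systemctl daemon-reload

systemctl restart docker

Check that Docker is on the same network range as flannel

From any master, check whether 172.17.1.1 can be pinged

On a worker node, verify that the docker0 address of the other nodes is reachable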

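Milestone 15: Install the master components

The following components are installed on the masters:

kube-apiserver

kube-scheduler

kube-controller-manager

Install the API server

Download the Kubernetes binary package (1.15.1)

Unpack it on master1, then copy it to the other masters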

cd /soft

tar xvf kubernetes-server-linux-amd64.tar.gz

cd kubernetes/server/bin/

cp kube-scheduler kube-apiserver kube-controller-manager kubectl /usr/local/bin/

Copy the binaries to the other master nodes

scp /usr/local/bin/kube* 10.0.0.71:/usr/local/bin

scp /usr/local/bin/kube* 10.0.0.72:/usr/local/bin

Configure the Kubernetes certificates

The Kubernetes components need certificates to communicate with each other; copy them from master1 to every master node

master1

mkdir -p /etc/kubernetes/{cfg,ssl}

cp /root/kubernetes/*.pem /etc/kubernetes/ssl/

master2

mkdir -p /etc/kubernetes/{cfg,ssl}

master3

mkdir -p /etc/kubernetes/{cfg,ssl}

Copy to the other nodes

scp /etc/kubernetes/ssl/* 10.0.0.71:/etc/kubernetes/ssl

scp /etc/kubernetes/ssl/* 10.0.0.72:/etc/kubernetes/ssl

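Create the TLS bootstrapping token (this part only explains its purpose; the actual operation is the next step)

TLS bootstrapping lets the kubelet first connect to the apiserver as a predefined low-privilege user and then request a certificate from the apiserver; the kubelet's certificate is signed dynamically by the apiserver

The token can be any string carrying 128 bits of randomness, and can be produced with a secure random number generator

head -c 16 /dev/urandom | od -An -t x | tr -d ' '

Edit the token file (all masters)

f89a76f197526a0d4bc2bf9c86e871c3: random string, generated by yourself; kubelet-bootstrap: user name; 10001: UID; system:kubelet-bootstrap: user group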
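The four fields described above can be combined into one token.csv line as follows. This is a sketch: `make_token_csv` is a hypothetical helper, and it generates a fresh token rather than reusing the example value.

```shell
# Sketch: print one token.csv line in the form token,user,uid,"group".
make_token_csv() {
    local token
    # 16 random bytes rendered as 32 hex characters
    token="$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')"
    echo "${token},kubelet-bootstrap,10001,\"system:kubelet-bootstrap\""
}

# Usage:
#   make_token_csv > /etc/kubernetes/cfg/token.csv
```

The same token has to be used on every master and later in bootstrap.kubeconfig, so generate it once and copy the file rather than running the function per host.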

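cat > /etc/kubernetes/cfg/token.csv

Create the apiserver configuration file (all master nodes)

The configuration files are essentially identical; with multiple nodes, only the IP addresses need to change

cat >/etc/kubernetes/cfg/kube-apiserver.cfg

Write the kube-apiserver service file (all master nodes)

cat >/usr/lib/systemd/system/kube-apiserver.service

Start the kube-apiserver service (all masters)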

systemctl daemon-reload

systemctl start kube-apiserver.service

systemctl enable kube-apiserver.service

Check

Verify that the secure port is listening

[root@master1 ssl]# netstat -anltup | grep 6443

tcp6 0 0 :::6443 :::* LISTEN 49473/kube-apiserve

tcp6 0 0 ::1:43658 ::1:6443 ESTABLISHED 49473/kube-apiserve

tcp6 0 0 ::1:6443 ::1:43658 ESTABLISHED 49473/kube-apiserve

Verify that the secure port is reachable (from a worker node)

[root@node1 ~]# telnet 10.0.0.70 6443

Trying 10.0.0.70...

Connected to 10.0.0.70.

Escape character is '^]'.

Milestone 16: Deploy the kube-scheduler service

Create the kube-scheduler configuration file (all master nodes)

cat >/etc/kubernetes/cfg/kube-scheduler.cfg

Create the kube-scheduler service file (all masters)

cat >/usr/lib/systemd/system/kube-scheduler.service

Start the kube-scheduler service (all master nodes)

systemctl daemon-reload

systemctl start kube-scheduler.service

systemctl enable kube-scheduler.service

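Check the master component status (on any master). controller-manager is reported as Unhealthy at this point because it has not been installed yet.

[root@master3 ssl]# kubectl get cs

NAME STATUS MESSAGE ERROR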

controller-manager Unhealthy Get http://127.0.0.1:10252/healthz: dial tcp 127.0.0.1:10252: connect: connection refused

scheduler Healthy ok

etcd-2 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

Milestone 17: Deploy kube-controller-manager

Create the kube-controller-manager configuration file (all master nodes)

cat >/etc/kubernetes/cfg/kube-controller-manager.cfg

Create the kube-controller-manager service file (all master nodes)

cat >/usr/lib/systemd/system/kube-controller-manager.service

Start the kube-controller-manager service (all master nodes)

systemctl daemon-reload

systemctl start kube-controller-manager.service

systemctl enable kube-controller-manager.service

systemctl status kube-controller-manager.service

Check the master component status

Deploy the worker node components only after all master components are healthy

[root@master1 ssl]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

Milestone 18: Deploy the worker node components

Deploy the kubelet component

Copy the kubelet and kube-proxy binaries from the master to the nodes

Copy to node1

scp /soft/kubernetes/server/bin/kubelet 10.0.0.73:/usr/local/bin/

scp /soft/kubernetes/server/bin/kube-proxy 10.0.0.73:/usr/local/bin/

Copy to node2

scp /soft/kubernetes/server/bin/kubelet 10.0.0.74:/usr/local/bin/

scp /soft/kubernetes/server/bin/kube-proxy 10.0.0.74:/usr/local/bin/

Create the kubelet bootstrap.kubeconfig file

On master1

mkdir /root/config ; cd /root/config
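A sketch of how bootstrap.kubeconfig can be produced with `kubectl config`. The apiserver address (here the VIP on port 6443), the cluster and context names, and the `KUBECTL` override are assumptions; the token is the example value from the token file step and must match token.csv.

```shell
# Sketch: build bootstrap.kubeconfig with kubectl config subcommands.
# KUBE_APISERVER pointing at the VIP is an assumption; adjust to your setup.
KUBECTL="${KUBECTL:-kubectl}"
KUBE_APISERVER="${KUBE_APISERVER:-https://10.0.0.80:6443}"
BOOTSTRAP_TOKEN="${BOOTSTRAP_TOKEN:-f89a76f197526a0d4bc2bf9c86e871c3}"

make_bootstrap_kubeconfig() {
    local out="${1:-bootstrap.kubeconfig}"
    # Cluster entry: CA certificate embedded, apiserver address
    $KUBECTL config set-cluster kubernetes \
        --certificate-authority=/etc/kubernetes/ssl/ca.pem \
        --embed-certs=true \
        --server="${KUBE_APISERVER}" \
        --kubeconfig="${out}"
    # Credentials: the low-privilege bootstrap user and its token
    $KUBECTL config set-credentials kubelet-bootstrap \
        --token="${BOOTSTRAP_TOKEN}" \
        --kubeconfig="${out}"
    # Context tying the cluster and the user together
    $KUBECTL config set-context default \
        --cluster=kubernetes \
        --user=kubelet-bootstrap \
        --kubeconfig="${out}"
    $KUBECTL config use-context default --kubeconfig="${out}"
}

# Usage (on master1, in /root/config):
#   make_bootstrap_kubeconfig bootstrap.kubeconfig
```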

cat >/root/config/environment.sh

Create the kube-proxy kubeconfig file (master1)

cat >/root/config/env_proxy.sh

Copy the kubeconfig files and certificates to all worker nodes

Copy bootstrap.kubeconfig and kube-proxy.kubeconfig to all worker nodes

First create the directories on the worker nodes

mkdir -p /etc/kubernetes/{cfg,ssl}

Copy the certificate files (master1)

scp /etc/kubernetes/ssl/* 10.0.0.73:/etc/kubernetes/ssl/

scp /etc/kubernetes/ssl/* 10.0.0.74:/etc/kubernetes/ssl/

Copy the kubeconfig files (master1)

Copy to node1

scp /root/config/bootstrap.kubeconfig 10.0.0.73:/etc/kubernetes/cfg

scp /root/config/kube-proxy.kubeconfig 10.0.0.73:/etc/kubernetes/cfg

Copy to node2

scp /root/config/bootstrap.kubeconfig 10.0.0.74:/etc/kubernetes/cfg

scp /root/config/kube-proxy.kubeconfig 10.0.0.74:/etc/kubernetes/cfg

Create the kubelet parameter file

Change the IP address for each node (run on the worker nodes)

cat >/etc/kubernetes/cfg/kubelet.config

cat >/etc/kubernetes/cfg/kubelet.config

Create the kubelet configuration file

Change the IP address for each node

cat >/etc/kubernetes/cfg/kubelet

cat >/etc/kubernetes/cfg/kubelet

Create the kubelet service file (all worker nodes)

cat >/usr/lib/systemd/system/kubelet.service

Bind the kubelet-bootstrap user to the system cluster role

Run on master1

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

Start the kubelet service (all worker nodes)

systemctl daemon-reload

systemctl start kubelet.service

systemctl enable kubelet.service

systemctl status kubelet.service

View and approve the CSR requests on the server side

Run on master1

[root@master1 ~]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-I81WpTLkI1GcJL1RN_7AsH2gDtqkRuIGb9Cvkzktg00 23s kubelet-bootstrap Pending

node-csr-vaqrhpHnGVhoa6lFp3ADkZxKtcZLYFEbuXbJ1r9AtrM 13s kubelet-bootstrap Pending

Approve the requests

Run on a master

kubectl certificate approve node-csr-I81WpTLkI1GcJL1RN_7AsH2gDtqkRuIGb9Cvkzktg00

kubectl certificate approve node-csr-vaqrhpHnGVhoa6lFp3ADkZxKtcZLYFEbuXbJ1r9AtrM
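With more than a couple of nodes, copying CSR names by hand gets tedious. The sketch below filters the pending names out of the `kubectl get csr` table; `pending_csrs` is a hypothetical helper, and it assumes the CONDITION column is the last one, as in the output above.

```shell
# Sketch: read `kubectl get csr` output on stdin and print the names of
# requests whose last column (CONDITION) is exactly "Pending".
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Usage:
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```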

Check the node status

[root@master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

10.0.0.73 Ready <none> 5s v1.15.1

10.0.0.74 Ready <none> 32m v1.15.1

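Deploy the kube-proxy component

kube-proxy runs on every worker node, watches the apiserver for changes to Service and Endpoint objects, and creates routing rules to load-balance services

Create the kube-proxy configuration file

Change the hostname-override address on each node

cat >/etc/kubernetes/cfg/kube-proxy

cat >/etc/kubernetes/cfg/kube-proxy

Create the kube-proxy systemd unit file (all worker nodes)

cat >/usr/lib/systemd/system/kube-proxy.service

Start the kube-proxy service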

systemctl daemon-reload

systemctl start kube-proxy.service

systemctl enable kube-proxy.service

systemctl status kube-proxy.service

Run a demo project as a check (on any master)

[root@master1 ~]# kubectl run nginx --image=nginx --replicas=2

kubectl run --generator=deployment/apps.v1 is DEPRECATED and will be removed in a future version. Use kubectl run --generator=run-pod/v1 or kubectl create instead.

deployment.apps/nginx created

[root@master1 ~]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

default nginx-7bb7cd8db5-8b5gs 0/1 ContainerCreating 0 7s

default nginx-7bb7cd8db5-dzc5q 0/1 ContainerCreating 0 7s

# Get the Pod IPs and the nodes they run on

[root@master1 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-7bb7cd8db5-8b5gs 1/1 Running 0 56s 172.17.1.2 10.0.0.74

nginx-7bb7cd8db5-dzc5q 1/1 Running 0 56s 172.17.46.2 10.0.0.73

# Expose the deployment through a Service

[root@master1 ~]# kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort

# List the Services

[root@master1 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 125m

nginx NodePort 192.168.0.147 <none> 88:39073/TCP 11s

# Access the Pods through the worker nodes

[root@master1 ~]# curl http://10.0.0.73:39073

[root@master1 ~]# curl http://10.0.0.74:39073

Delete the demo project

kubectl delete deployment nginx

kubectl delete pods nginx

kubectl delete svc -l run=nginx

Milestone 19: Deploy DNS

Transfer the image to the worker nodes first, then load it

docker load -i coredns1.0.6.tar

Apply the yml file on master1

kubectl apply -f coredns-1.0.6.yml

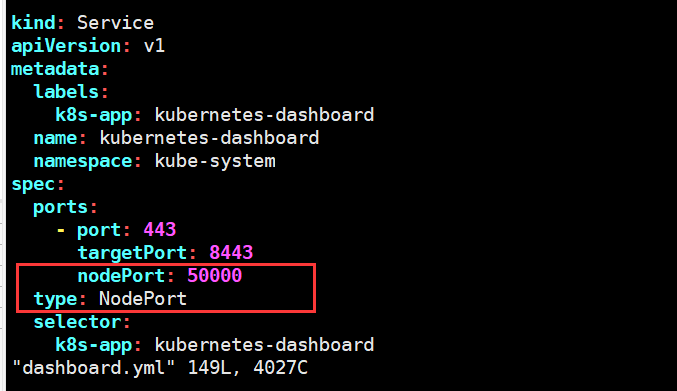

第20个里程: 部署dashboard

部署

下载yml文件以后,修改一下如下地方

访问的时候前面地址加上https:节点ip:50000

创建用户授权

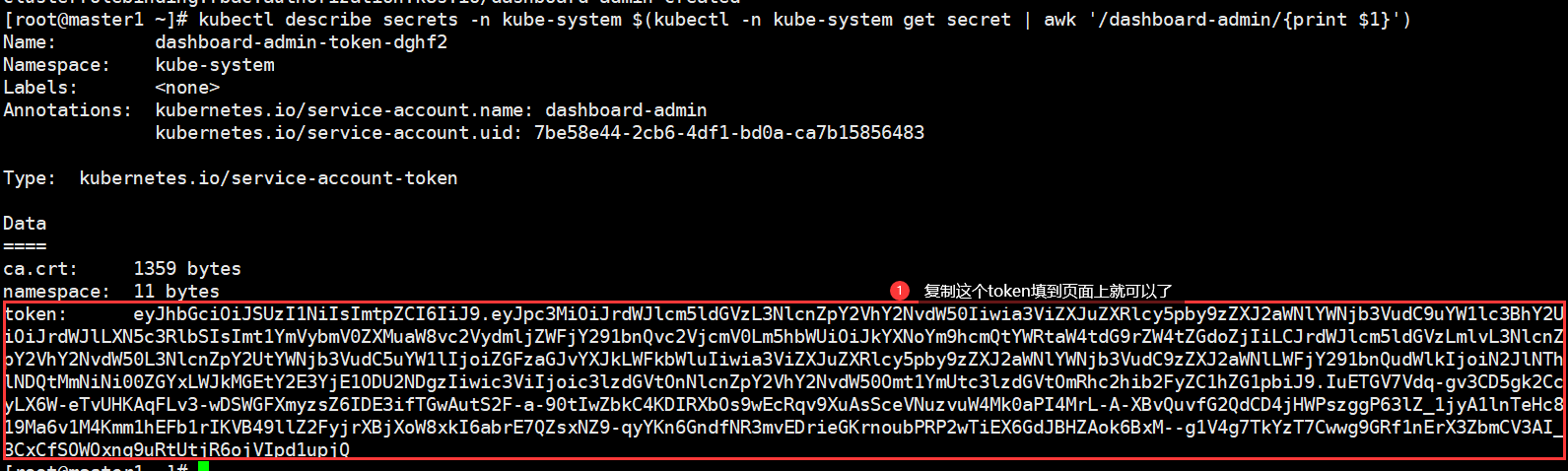

master上执行(任意一个master节点)

kubectl create serviceaccount dashboard-admin -n kube-system

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

Get the token

kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')

Milestone 21: Deploy Traefik 2.0

Create traefik-crd.yaml

CRD definitions are cluster-scoped, not namespaced

mkdir /root/ingress && cd /root/ingress

[root@master1 ingress]# cat traefik-crd.yaml

apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ingressroutes.traefik.containo.us
spec:
  scope: Namespaced
  group: traefik.containo.us
  version: v1alpha1
  names:
    kind: IngressRoute
    plural: ingressroutes
    singular: ingressroute
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: ingressroutetcps.traefik.containo.us
spec:
  scope: Namespaced
  group: traefik.containo.us
  version: v1alpha1
  names:
    kind: IngressRouteTCP
    plural: ingressroutetcps
    singular: ingressroutetcp
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: middlewares.traefik.containo.us
spec:
  scope: Namespaced
  group: traefik.containo.us
  version: v1alpha1
  names:
    kind: Middleware
    plural: middlewares
    singular: middleware
---
apiVersion: apiextensions.k8s.io/v1beta1
kind: CustomResourceDefinition
metadata:
  name: tlsoptions.traefik.containo.us
spec:
  scope: Namespaced
  group: traefik.containo.us
  version: v1alpha1
  names:
    kind: TLSOption
    plural: tlsoptions
    singular: tlsoption

Create

kubectl apply -f traefik-crd.yaml

Check

[root@master1 ingress]# kubectl get crd

NAME CREATED AT

ingressroutes.traefik.containo.us 2023-03-11T01:29:34Z

ingressroutetcps.traefik.containo.us 2023-03-11T01:29:34Z

middlewares.traefik.containo.us 2023-03-11T01:29:34Z

tlsoptions.traefik.containo.us 2023-03-11T01:29:34Z

Create the Traefik RBAC file

The ServiceAccount in the RBAC file is namespaced (kube-system)

[root@master1 ingress]# cat traefik-rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  namespace: kube-system
  name: traefik-ingress-controller
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: traefik-ingress-controller
rules:
  - apiGroups: [""]
    resources: ["services","endpoints","secrets"]
    verbs: ["get","list","watch"]
  - apiGroups: ["extensions"]
    resources: ["ingresses"]
    verbs: ["get","list","watch"]
  - apiGroups: ["extensions"]
    resources: ["ingresses/status"]
    verbs: ["update"]
  - apiGroups: ["traefik.containo.us"]
    resources: ["middlewares"]
    verbs: ["get","list","watch"]
  - apiGroups: ["traefik.containo.us"]
    resources: ["ingressroutes"]
    verbs: ["get","list","watch"]
  - apiGroups: ["traefik.containo.us"]
    resources: ["ingressroutetcps"]
    verbs: ["get","list","watch"]
  - apiGroups: ["traefik.containo.us"]
    resources: ["tlsoptions"]
    verbs: ["get","list","watch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: traefik-ingress-controller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: traefik-ingress-controller
subjects:
  - kind: ServiceAccount
    name: traefik-ingress-controller
    namespace: kube-system

kubectl apply -f traefik-rbac.yaml

Create the Traefik ConfigMap

[root@master1 ingress]# cat traefik-config.yaml

kind: ConfigMap
apiVersion: v1
metadata:
  name: traefik-config
  namespace: kube-system
data:
  traefik.yaml: |-
    serversTransport:
      insecureSkipVerify: true
    api:
      insecure: true
      dashboard: true
      debug: true
    metrics:
      prometheus: ""
    entryPoints:
      web:
        address: ":80"
      websecure:
        address: ":443"
    providers:
      kubernetesCRD: ""
    log:
      filePath: ""
      level: error
      format: json
    accessLog:
      filePath: ""
      format: json
      bufferingSize: 0
      filters:
        retryAttempts: true
        minDuration: 20
      fields:
        defaultMode: keep
        names:
          ClientUsername: drop
        headers:
          defaultMode: keep
          names:
            User-Agent: redact
            Authorization: drop
            Content-Type: keep

Create

kubectl apply -f traefik-config.yaml

Check

[root@master1 ingress]# kubectl get configmap -n kube-system

NAME DATA AGE

coredns 1 14h

extension-apiserver-authentication 1 15h

kubernetes-dashboard-settings 1 13h

traefik-config 1 4s

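Label the nodes

Because Traefik is deployed as a Kubernetes DaemonSet, the nodes must be labeled in advance so that the Pods are scheduled automatically onto the labeled nodes when the workload is deployed

kubectl label nodes 192.168.3.203 IngressProxy=true

Check that the label was applied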

[root@master1 ingress]# kubectl get nodes --show-labels

NAME STATUS ROLES AGE VERSION LABELS

192.168.3.203 Ready 13h v1.15.1 IngressProxy=true,beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=192.168.3.203,kubernetes.io/os=linux

192.168.3.204 Ready 13h v1.15.1 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=192.168.3.204,kubernetes.io/os=linux

Note that ports 80 and 443 must be free on every labeled worker node

netstat -antupl | grep -E "80|443"
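The pattern `80|443` also matches ports such as 8080 or 10800. As a sketch of a stricter check, the helper below (a hypothetical `ports_in_use`) reads `ss -ltn` output and reports only exact matches on the listening port; it assumes the local address is the fourth column, as in the default `ss` layout.

```shell
# Sketch: read `ss -ltn` output on stdin and print 80 and/or 443 if a
# socket is listening on exactly that port.
ports_in_use() {
    awk 'NR > 1 {
             n = split($4, a, ":")      # port is the part after the last colon
             p = a[n]
             if (p == "80" || p == "443") print p
         }' | sort -un
}

# Usage (on each labeled node):
#   ss -ltn | ports_in_use     # no output means both ports are free
```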

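Create the Traefik deployment file

Because of the node label set above, the Pods are scheduled only onto the labeled nodes, even though a DaemonSet is used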

[root@master1 ingress]# cat traefik-deploy.yaml

apiVersion: v1
kind: Service
metadata:
  name: traefik
  namespace: kube-system
spec:
  ports:
    - name: web
      port: 80
    - name: websecure
      port: 443
    - name: admin
      port: 8080
  selector:
    app: traefik
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: traefik-ingress-controller
  namespace: kube-system
  labels:
    app: traefik
spec:
  selector:
    matchLabels:
      app: traefik
  template:
    metadata:
      name: traefik
      labels:
        app: traefik
    spec:
      serviceAccountName: traefik-ingress-controller
      terminationGracePeriodSeconds: 1
      containers:
        - image: traefik:2.0.5
          name: traefik-ingress-lb
          ports:
            - name: web
              containerPort: 80
              hostPort: 80
            - name: websecure
              containerPort: 443
              hostPort: 443
            - name: admin
              containerPort: 8080
          resources:
            limits:
              cpu: 2000m
              memory: 1024Mi
            requests:
              cpu: 1000m
              memory: 1024Mi
          securityContext:
            capabilities:
              drop:
                - ALL
              add:
                - NET_BIND_SERVICE
          args:
            - --configfile=/config/traefik.yaml
          volumeMounts:
            - mountPath: "/config"
              name: "config"
      volumes:
        - name: config
          configMap:
            name: traefik-config
      tolerations:
        - operator: "Exists"
      nodeSelector:
        IngressProxy: "true"

Create

kubectl apply -f traefik-deploy.yaml

Check the running status

[root@master1 ingress]# kubectl get DaemonSet -A

NAMESPACE NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE

kube-system traefik-ingress-controller 1 1 0 1 0 IngressProxy=true 18s

Traefik route configuration

vim traefik-dashboard-route.yaml

apiVersion: traefik.containo.us/v1alpha1
kind: IngressRoute
metadata:
  name: traefik-dashboard-route
  namespace: kube-system
spec:
  entryPoints:
    - web
  routes:
    - match: Host(`ingress.abcd.com`)
      kind: Rule
      services:
        - name: traefik
          port: 8080

Create

kubectl apply -f traefik-dashboard-route.yaml

Check

[root@master1 ingress]# kubectl get ingressroute.traefik.containo.us -A

NAMESPACE NAME AGE

kube-system traefik-dashboard-route 78s

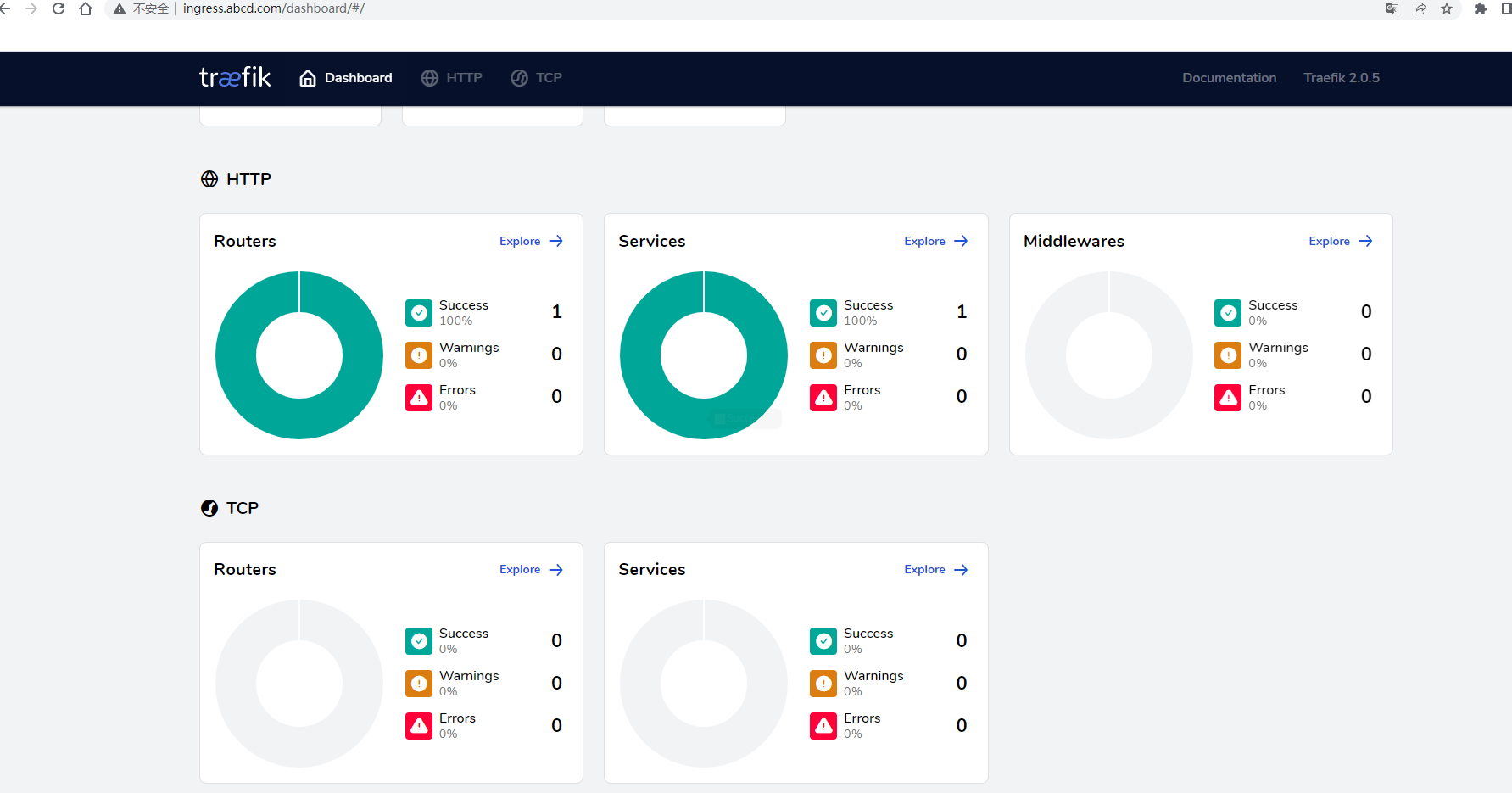

Access the Traefik Dashboard from a client

Add an entry to the client machine's hosts file, or set up DNS resolution

/etc/hosts

192.168.3.203 ingress.abcd.com

Open the web page
